Multi-Agent in Production 2026: 3 Patterns That Survived

TL;DR: The 2024 hype that “more agents = more intelligence” failed in production. Five major vendors (Anthropic, OpenAI, AutoGen, Cognition, LangChain) converged on orchestrator+isolated-subagents as the default architecture. Peer-collaboration “GroupChat” patterns lost ground. Three patterns survived — agent-flow (assembly line), orchestration (hub-and-spoke), and bounded collaboration (controlled peer mesh). This article covers the research, the cost reality (15× token overhead of multi-agent vs chat), and a decision framework for your next project.

The Multi-Agent Wake-Up Call

In 2024, the industry believed adding more agents meant more intelligence. By mid-2026, production data told a different story.

The evidence is brutal:

- Multi-agent systems use 15× more tokens than chat interactions — and token usage explains 80% of performance variance (Tran & Kiela, arXiv 2604.02460)

- Single-agent systems consistently match or outperform multi-agent systems on multi-hop reasoning tasks when reasoning tokens are held constant

- The “From Spark to Fire” cascade paper (2026) found that a single atomic falsehood can infect 100% of agents in hub-and-spoke topologies (LangGraph: 100% system-wide failure on hub injection)

- MIT’s Simchi-Levi et al. proved: “Without new exogenous signals, any delegated acyclic network is decision-theoretically dominated by a centralized Bayes decision maker”

The $75,000/day bill from runaway agent loops (at 15¢/execution × 500K requests) convinced teams that architecture decisions aren’t theoretical; they’re budget decisions.

“An orchestration pattern that works beautifully at 100 requests per minute can completely fall apart at 10,000.” — MachineLearningMastery, 2026

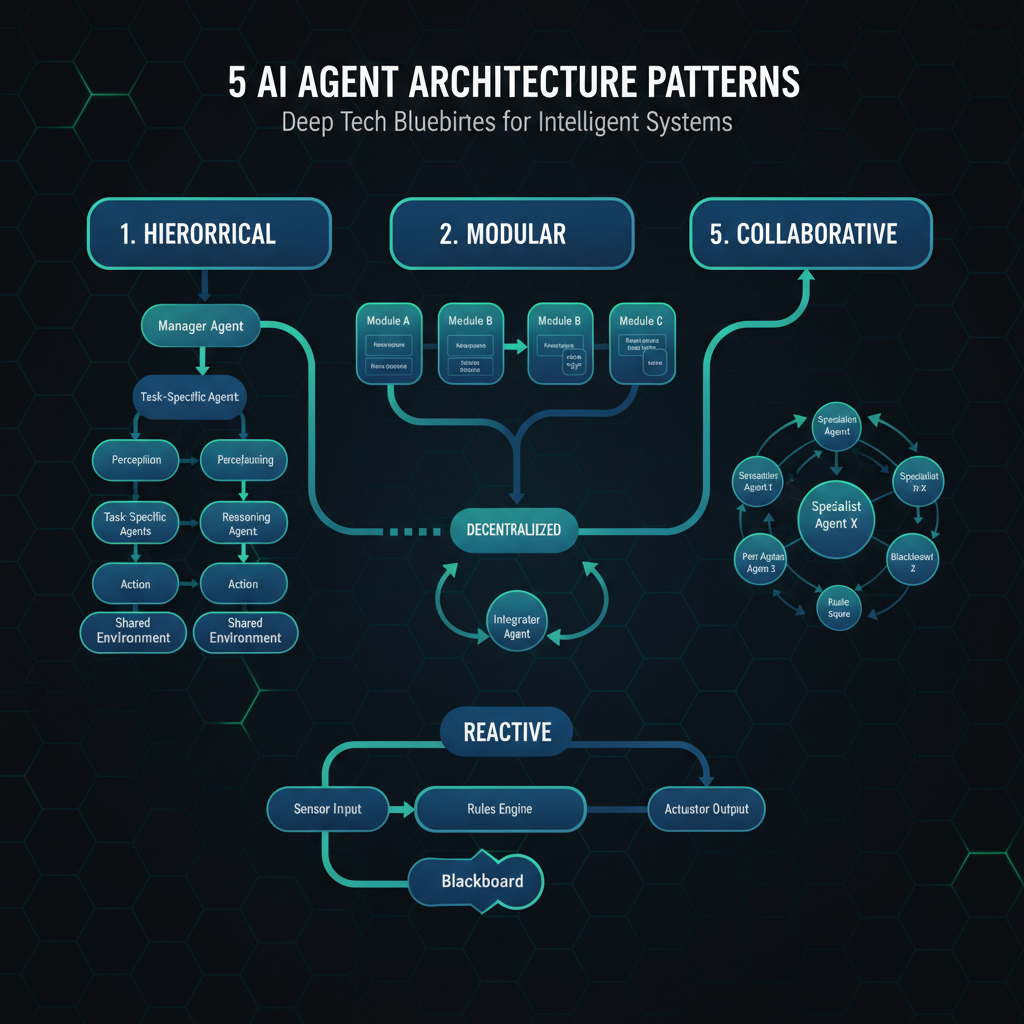

Three Patterns — Only Three Survived

After analyzing 5 frameworks across 150+ tasks, researchers identified 14 failure modes in 3 categories. Most were structural — not fixable with better prompts. Three patterns endured:

1. Agent-Flow (Assembly Line)

Work flows through stages in sequence, each stage producing intermediate artifacts.

| Aspect | Detail |
|---|---|
| Analogy | Factory assembly line |
| Best for | Natural stage boundaries, explicit artifacts, strong traceability |
| Failure mode | Early errors poison downstream; verification arrives after contextual debt |
| Mitigation | Intermediate-artifact schemas + per-stage evaluators |
| Observability | Highest |
| Token cost | Moderate |
| Blame assignment | Easy |

When to use: Your task has clear sequential stages (research → outline → write → review), each producing a tangible intermediate output.

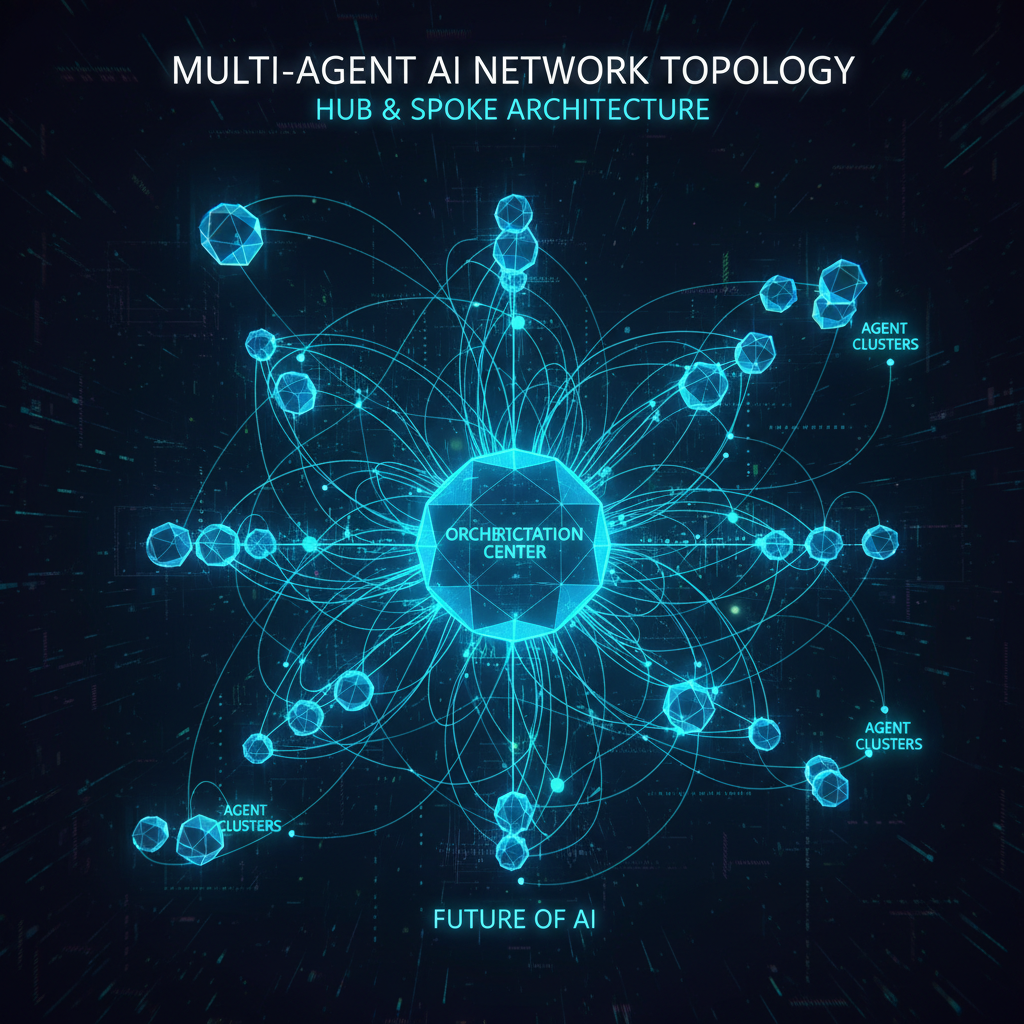
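The mitigation listed for agent-flow (intermediate-artifact schemas plus per-stage evaluators) can be sketched in plain Python. This is a minimal illustration, not any framework's API: `Artifact`, `Stage`, `StageFailed`, `run_pipeline`, and the toy stage functions are all hypothetical names, and the lambdas stand in for real model calls and schema checks.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Artifact:
    """Explicit intermediate artifact handed from one stage to the next."""
    stage: str
    content: str


@dataclass(frozen=True)
class Stage:
    name: str
    run: Callable[[str], str]        # stand-in for a model call
    evaluate: Callable[[str], bool]  # per-stage evaluator (the gate)


class StageFailed(Exception):
    """Raised when a stage's output fails its evaluator."""


def run_pipeline(task: str, stages: list[Stage]) -> list[Artifact]:
    """Run stages in order, stopping at the first artifact that fails its gate."""
    artifacts: list[Artifact] = []
    current = task
    for stage in stages:
        output = stage.run(current)
        if not stage.evaluate(output):
            # Fail fast so an early error cannot poison downstream stages.
            raise StageFailed(f"{stage.name} produced an invalid artifact")
        artifacts.append(Artifact(stage=stage.name, content=output))
        current = output
    return artifacts


# Toy stages: real ones would call a model and validate against a schema.
stages = [
    Stage("research", lambda t: f"notes on {t}", lambda out: out.startswith("notes")),
    Stage("outline", lambda n: f"outline from {n}", lambda out: "outline" in out),
    Stage("write", lambda o: f"draft from {o}", lambda out: len(out) > 10),
]

trace = run_pipeline("agent patterns", stages)
print([a.stage for a in trace])
```

Because every stage leaves a named artifact behind, the returned list doubles as the trace you read when assigning blame.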

2. Orchestration (Hub-and-Spoke)

A single orchestrator owns full conversation context, spawning ephemeral isolated subagents that return compressed summaries. No peer-to-peer communication.

| Aspect | Detail |
|---|---|
| Analogy | Franchise / command hierarchy |
| Best for | Domain routing, compliance boundaries, wide-but-modular tasks |
| Failure mode | Hub fragility (one bad routing cascades) + translation loss at center |
| Mitigation | Governance layer (pushes defense from 0.32 → >0.89) |
| Observability | High |
| Token cost | High (15× chat) |
| Blame assignment | Moderate |

This is the default pattern in 2026. Five major vendors converged here:

- Cognition: “Don’t Build Multi-Agents” (June 2025) → shipped “Devin can Manage Devins” (March 2026)

- Anthropic: “brain/hands” architecture with role-scoped subagents (April 2026)

- OpenAI: Agents SDK update made nested handoff history opt-in (April 15, 2026)

- AutoGen: merged into Microsoft Agent Framework 1.0 — peer GroupChat no longer flagship

- LangChain: supervisor-as-tool over supervisor library

When to use: You need domain isolation, compliance boundaries, or parallel independent research queries.
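For the parallel-research case, the hub-and-spoke shape can be sketched with nothing but the standard library. This is an illustration under assumptions: `run_subagent`, `orchestrate`, and `MAX_SUMMARY_CHARS` are hypothetical names, the subagent body is a placeholder for a real model call, and the 200-character cap is an arbitrary value chosen for the example.

```python
from concurrent.futures import ThreadPoolExecutor

MAX_SUMMARY_CHARS = 200  # assumed cap; tune to your own context budget


def run_subagent(query: str) -> str:
    """Hypothetical isolated subagent: sees only its query, returns a summary."""
    # The working transcript stays local and is never sent back to the hub.
    transcript = [f"searching: {query}", f"reading sources for: {query}"]
    summary = f"{query}: {len(transcript)} steps, key finding goes here"
    return summary[:MAX_SUMMARY_CHARS]


def orchestrate(queries: list[str]) -> dict[str, str]:
    """Hub: fan out independent queries and collect compressed summaries only."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        summaries = list(pool.map(run_subagent, queries))  # keeps input order
    return dict(zip(queries, summaries))


results = orchestrate(["pricing", "compliance", "competitors"])
for query, summary in results.items():
    print(query, "->", summary)
```

The subagents never see each other's output, which is the "no peer-to-peer communication" property described above.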

3. Bounded Collaboration (Controlled Peer Mesh)

Peers coordinate via shared workspace with explicit phase gates, hidden selectors, and a final arbiter. Free mesh survived only as a controlled subroutine inside a supervisor.

| Aspect | Detail |
|---|---|
| Analogy | Sports team with a coach |
| Best for | Narrow-domain reliability, disjoint tool/context domains |
| Failure mode | Consensus inertia, message explosion, steep communication tax |
| Mitigation | Phase gates, shared artifacts, arbitration layer |
| Observability | Lowest |
| Token cost | Highest |
| Blame assignment | Hard |

When to use: Domain isolation is a hard requirement and the peers hold disjoint tools or context. The strongest case is Drammeh’s incident-response paper (348 controlled trials): a 100% actionable recommendation rate vs 1.7% for single-agent, with 80× action specificity and zero quality variance.
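A rough sketch of the three mitigations (phase gates, shared artifacts, an arbitration layer) follows. It is a toy under stated assumptions, not the method from any cited paper: `collaborate`, `majority_arbiter`, and the two lambda peers are hypothetical, the phase gate is simplified to "stop when a round is unanimous", and the arbiter is a plain majority vote.

```python
from collections import Counter
from typing import Callable

# A peer maps (task, snapshot of shared notes) to a proposal string.
Peer = Callable[[str, list[str]], str]


def majority_arbiter(proposals: list[str]) -> str:
    """Final arbiter: pick the most frequent proposal across all rounds."""
    return Counter(proposals).most_common(1)[0][0]


def collaborate(
    task: str,
    peers: dict[str, Peer],
    arbiter: Callable[[list[str]], str] = majority_arbiter,
    max_rounds: int = 3,
) -> tuple[str, list[str]]:
    """Run bounded rounds over a shared workspace, then let the arbiter decide."""
    workspace: list[str] = []  # shared artifact store, no direct peer messaging
    for _ in range(max_rounds):  # hard cap bounds message volume
        snapshot = list(workspace)  # every peer sees the same state this round
        proposals = [peer(task, snapshot) for peer in peers.values()]
        workspace.extend(proposals)
        if len(set(proposals)) == 1:  # phase gate: unanimous round, stop early
            break
    return arbiter(workspace), workspace


peers = {
    "network": lambda task, notes: "restart gateway",
    # This peer changes its mind once it can read the shared notes.
    "database": lambda task, notes: "restart gateway" if notes else "failover replica",
}
decision, notes_log = collaborate("checkout latency spike", peers)
print(decision, len(notes_log))
```

The `max_rounds` cap is what keeps message explosion bounded; without it, two peers that never agree would loop indefinitely.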

When NOT to build multi-agent at all

“Under a fixed reasoning-token budget and with perfect context utilization, single-agent systems are more information-efficient.” — Tran & Kiela, arXiv 2604.02460

Not recommended for: sequential tasks, shared-state work, or anything resembling “do these steps in order with judgment between them.” The literature recommends a single agent with disciplined context management.

The Subagent Contract

Every surviving implementation uses the P2 prompt pattern — a structured contract between orchestrator and subagent:

Each subagent needs:

1. An objective

2. An output format

3. Guidance on tools and sources to use

4. Clear task boundaries

Three rules (validated across 2025–2026 production deployments):

- Dedicated system prompt — never reuse the orchestrator’s prompt. Subagents need role-scoped context.

- First user message is the structured brief — objective, format, tools, boundaries. Free-form delegations are a documented failure mode.

- Return a summary string, not a transcript — inlining the full transcript pollutes context and burns tokens at 15× the rate.

Rule 4 (often missed): Forward worker output directly to the user when the supervisor’s only job is to deliver it. ~50% of swarm-vs-supervisor performance gain comes from this single change.
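The contract above can be made hard to skip by encoding it as a type. The sketch below is one possible shape, not a published spec: `SubagentBrief`, `build_messages`, and the field names are hypothetical, and the `{"role": ..., "content": ...}` message dicts assume a generic chat-style API rather than any specific SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubagentBrief:
    """Structured brief: objective, output format, tools, boundaries."""
    objective: str
    output_format: str
    tools: tuple[str, ...]
    boundaries: str

    def __post_init__(self) -> None:
        # Reject free-form delegation: every field must be filled in.
        for name in ("objective", "output_format", "boundaries"):
            if not getattr(self, name).strip():
                raise ValueError(f"brief is missing {name}")
        if not self.tools:
            raise ValueError("brief is missing tools")

    def to_user_message(self) -> str:
        return "\n".join([
            f"Objective: {self.objective}",
            f"Output format: {self.output_format}",
            f"Tools: {', '.join(self.tools)}",
            f"Boundaries: {self.boundaries}",
        ])


def build_messages(subagent_system_prompt: str, brief: SubagentBrief) -> list[dict]:
    """Dedicated system prompt first, structured brief as the first user message."""
    return [
        {"role": "system", "content": subagent_system_prompt},
        {"role": "user", "content": brief.to_user_message()},
    ]


brief = SubagentBrief(
    objective="Summarize refund policy changes since January",
    output_format="Five bullet points, each with a source",
    tools=("policy_search",),
    boundaries="Read-only; do not contact customers",
)
messages = build_messages("You are a billing-policy researcher.", brief)
print(messages[1]["content"])
```

Constructing a brief with an empty field raises `ValueError`, so a free-form delegation fails before any tokens are spent.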

Copy-Paste: Orchestrator Template

Here’s a minimal orchestrator skeleton using LangGraph’s `Command`-based routing. The subagent bodies are placeholders for real model calls:

```python
from typing import Literal

from langgraph.graph import END, START, MessagesState, StateGraph
from langgraph.types import Command


class AgentState(MessagesState):
    """Typed state for orchestrator + subagents."""

    objective: str
    subagent_results: list[dict]
    final_answer: str


def orchestrator(
    state: AgentState,
) -> Command[Literal["researcher", "writer", "reviewer", "__end__"]]:
    """Orchestrator routes work to specialists based on state."""
    results = state.get("subagent_results", [])
    if not results:
        # First pass: capture the objective and start with research.
        return Command(
            goto="researcher",
            update={"objective": state["messages"][-1].content},
        )
    if len(results) == 1:  # after research comes back, route to writer
        return Command(goto="writer")
    if len(results) == 2:  # after writer, route to reviewer
        return Command(goto="reviewer")
    # All three stages are done: record the last summary and stop.
    return Command(goto=END, update={"final_answer": results[-1]["summary"]})


def make_subagent(role: str):
    """Build an ephemeral subagent node that returns a summary, not a transcript."""

    def subagent(state: AgentState) -> Command[Literal["orchestrator"]]:
        # Placeholder: call the role-scoped model here and compress its output.
        result = {"role": role, "summary": f"{role} findings..."}
        return Command(
            goto="orchestrator",
            update={"subagent_results": state.get("subagent_results", []) + [result]},
        )

    return subagent


# Build graph: every node the orchestrator can route to must be registered.
builder = StateGraph(AgentState)
builder.add_node("orchestrator", orchestrator)
for role in ("researcher", "writer", "reviewer"):
    builder.add_node(role, make_subagent(role))
builder.add_edge(START, "orchestrator")
graph = builder.compile()
```

When to use: Any production system where you need domain-level routing (billing vs support vs compliance) without cross-contamination.

When NOT to use: If your task fits in a single agent’s context window (<128K tokens), start there. Multi-agent is a complexity tax, not a capability upgrade.

Decision Framework

| Your bottleneck | Recommended pattern | Why |
|---|---|---|
| Sequential work with clear stages | Agent-Flow | Highest observability, easiest debugging |
| Domain isolation required | Orchestration | Industry default in 2026, vendor-supported |
| Narrow-domain reliability | Bounded Collaboration | Drammeh results: 100% vs 1.7% |
| Parallel independent research | Orchestration | +16.28% relative improvement (AORCHESTRA) |
| Shared-state reasoning | Single agent | 15× fewer tokens, same-or-better accuracy |
| Budget constraint | Single agent | $0.15/execution vs $2.25+ for multi-agent |
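The table above is small enough to encode directly, which makes the choice reviewable in code. This is only a lookup sketch: `Bottleneck`, `RECOMMENDED`, and `recommend` are hypothetical names, and the fallback to a single agent when no bottleneck is named reflects this article's advice rather than any library behavior.

```python
from enum import Enum
from typing import Optional


class Bottleneck(Enum):
    SEQUENTIAL_STAGES = "sequential work with clear stages"
    DOMAIN_ISOLATION = "domain isolation required"
    NARROW_RELIABILITY = "narrow-domain reliability"
    PARALLEL_RESEARCH = "parallel independent research"
    SHARED_STATE = "shared-state reasoning"
    BUDGET = "budget constraint"


# Rows copied from the decision table.
RECOMMENDED = {
    Bottleneck.SEQUENTIAL_STAGES: "agent-flow",
    Bottleneck.DOMAIN_ISOLATION: "orchestration",
    Bottleneck.NARROW_RELIABILITY: "bounded collaboration",
    Bottleneck.PARALLEL_RESEARCH: "orchestration",
    Bottleneck.SHARED_STATE: "single agent",
    Bottleneck.BUDGET: "single agent",
}


def recommend(bottleneck: Optional[Bottleneck]) -> str:
    """Return the table's pattern; with no named bottleneck, stay single-agent."""
    if bottleneck is None:
        return "single agent"
    return RECOMMENDED[bottleneck]


print(recommend(Bottleneck.PARALLEL_RESEARCH))
print(recommend(None))
```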

The Cost Reality

| Pattern | Tokens per request (vs chat) | Cost per 10K runs |
|---|---|---|
| Single agent (chat-like) | 1× | $15–30 |
| Agent-flow | 3–5× | $45–150 |
| Orchestration | 8–15× | $120–450 |
| Bounded collaboration | 15–25× | $225–750 |

Key insight: The 15× cost multiplier means a single-agent system costing $15/day becomes $225/day as multi-agent. Over a month, that’s $450 vs $6,750 — a 15× line-item difference that teams building multi-agent systems often discover only after deployment.
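The arithmetic above is easy to rerun for your own baseline. The sketch below assumes cost scales linearly with token usage, which is a simplification; `PATTERN_MULTIPLIERS`, `monthly_cost`, and `monthly_range` are hypothetical names, and the multiplier ranges are copied from the cost table.

```python
# Token multipliers relative to a chat-like single agent, from the cost table.
PATTERN_MULTIPLIERS = {
    "single agent": (1, 1),
    "agent-flow": (3, 5),
    "orchestration": (8, 15),
    "bounded collaboration": (15, 25),
}


def monthly_cost(daily_baseline_usd: float, multiplier: float, days: int = 30) -> float:
    """Project spend, assuming cost scales linearly with token usage."""
    return daily_baseline_usd * multiplier * days


def monthly_range(pattern: str, daily_baseline_usd: float) -> tuple[float, float]:
    """Low and high monthly estimates for one pattern."""
    low, high = PATTERN_MULTIPLIERS[pattern]
    return (
        monthly_cost(daily_baseline_usd, low),
        monthly_cost(daily_baseline_usd, high),
    )


for pattern in PATTERN_MULTIPLIERS:
    low, high = monthly_range(pattern, daily_baseline_usd=15)
    print(f"{pattern:<22} ${low:,.0f} to ${high:,.0f} per month")
```

With a $15/day baseline, the single-agent row comes out at $450 per month and the 15× case at $6,750, matching the figures in the paragraph above.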

“Billing unpredictability is a major stressor: variable execution paths make cost forecasting genuinely difficult. One edge case can trigger retries costing 50× more than the normal path.” — ML Mastery, 2026

The Bottom Line

Multi-agent systems are not an intelligence upgrade — they’re an architectural choice with specific tradeoffs. The burden of proof is on multi-agent, not single-agent.

- Start single-agent. Add complexity only when you can name the specific bottleneck (domain isolation, parallel research, compliance boundaries).

- If you must go multi-agent, use orchestrator+isolated-subagents. This is where the entire industry converged in 2026. Unbounded peer collaboration (GroupChat) failed in production.

- Budget for 15× token overhead before you start. The shock comes not from building the system, but from running it.

What NOT to Do

- ❌ Don’t add agents for the sake of architecture sophistication

- ❌ Don’t peer-collaborate without phase gates and arbitration

- ❌ Don’t skip the subagent contract — free-form delegation is a documented failure mode

- ❌ Don’t deploy without cost guards — one runaway loop at 15× overhead erases any performance gain

- ❌ Don’t assume more agents = better results — the evidence shows the opposite
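A cost guard does not need to be elaborate to stop a runaway loop. The sketch below is one minimal approach, with hypothetical names (`CostGuard`, `BudgetExceeded`, `charge`) and arbitrary limits; it counts tokens rather than dollars so the arithmetic stays exact, and it checks the budget before the call is made.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a run would exceed its token or step budget."""


@dataclass
class CostGuard:
    max_tokens: int
    max_steps: int
    tokens_spent: int = 0
    steps: int = 0

    def charge(self, tokens: int) -> None:
        """Reserve budget for one agent call; refuse if a limit would be crossed."""
        if self.steps + 1 > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} reached")
        if self.tokens_spent + tokens > self.max_tokens:
            raise BudgetExceeded(f"token limit of {self.max_tokens} reached")
        self.steps += 1
        self.tokens_spent += tokens


guard = CostGuard(max_tokens=100_000, max_steps=50)
completed = 0
try:
    while True:  # simulated runaway agent loop
        guard.charge(tokens=20_000)  # estimate for the next call
        completed += 1               # the real agent call would go here
except BudgetExceeded as exc:
    print(f"halted after {completed} calls: {exc}")
```

Giving each subagent its own guard is one way to get the per-agent cost monitoring the checklist below asks for.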

Quick-Start Checklist

Before deploying any multi-agent system, verify each item:

- Can this be a single agent? (Try first — 80% of cases)

- Is domain isolation a genuine requirement?

- Do you have per-agent cost monitoring?

- Does each subagent have a structured brief (objective, format, tools, boundaries)?

- Is there a governance layer for cascade prevention?

- Can you trace every decision path end-to-end?

- Have you tested at 100× target load? (Behavior changes under scale)