AI Agents in Cybersecurity 2026: 5 Use Cases Reshaping SOC

TL;DR: IDC forecasts that by 2028, AI agents will triage 80% of alerts in most SOCs worldwide. In 2026, the shift is already underway: SOC teams running agentic AI workflows cut alert triage from 45 minutes to under 2 minutes, reduce MTTR by 94% (3.5 hours to 12 minutes), and shrink the false-positive share of the analyst queue from 67% to 12%. Here are the 5 production-validated use cases, with real benchmarks, deployment templates, and a decision framework for your security team.

Why 2026 Is the Inflection Point

Three things converged this year:

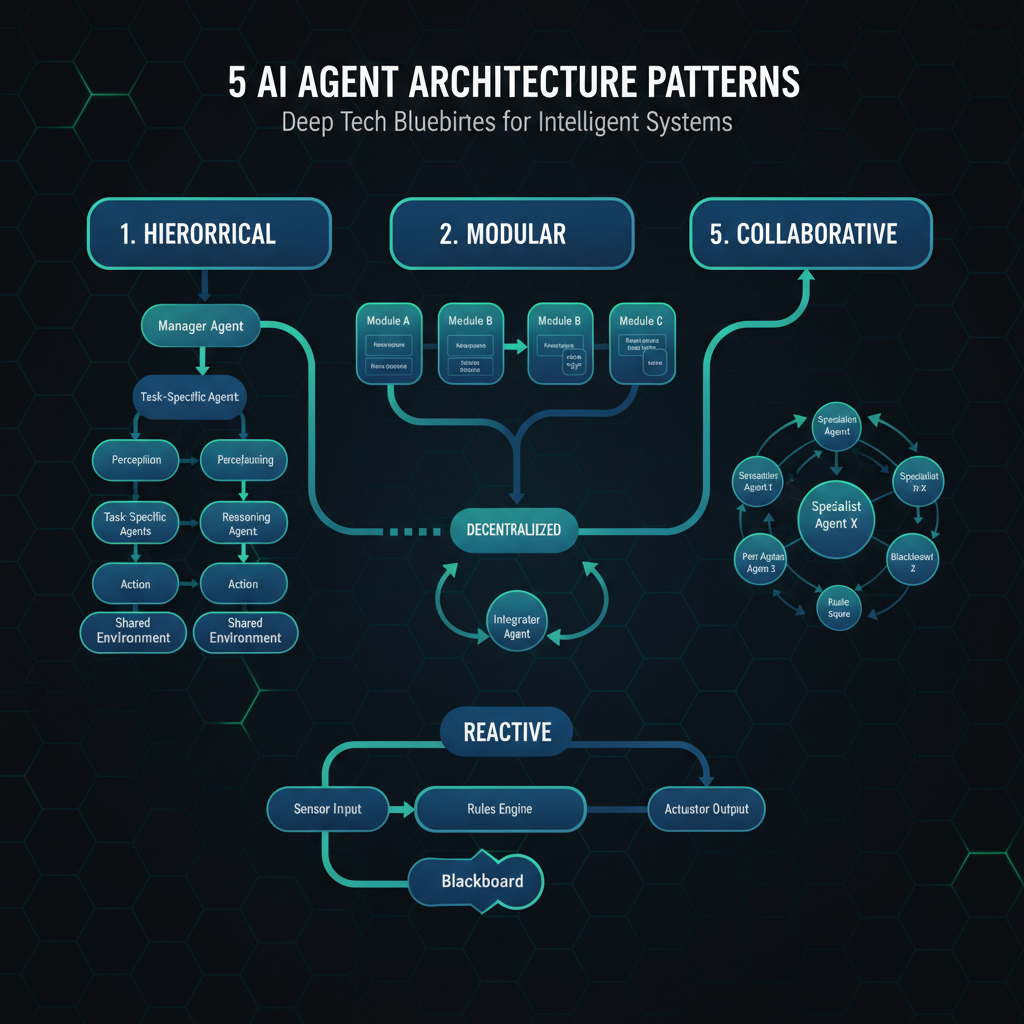

- Agent frameworks stabilized — LangGraph, CrewAI, and Elastic Agent Builder all hit production-ready maturity with built-in guardrails and audit trails

- Defenses against agent-specific attacks matured — prompt injection protections, least-privilege agent permissions, and runtime monitoring became deployable, not theoretical

- Executive demand for AI SOC outcomes — 78% of boards now ask about AI-driven security efficiency (Forbes Insights), up from 41% in 2024

IDC’s 2026 FutureScape report makes the forecast explicit: “By 2028, AI agents will be triaging 80% of alerts in most security operations centers worldwide.” Palo Alto Networks, meanwhile, predicts that 2026 will see the first major breach caused by an AI agent operating with legitimate human credentials, a reminder that defensive use of agents must stay ahead of their exploitation.

“Agentic AI SOCs differ from copilot-only models by autonomously prioritizing attacks over alerts, executing closed-loop containment, and providing traceable reasoning for every decision.” — Elastic Security Labs

The Maturity Model: Where Does Your SOC Stand?

| Level | Name | Automation Rate | MTTD (mean time to detect) | MTTR (mean time to respond) |
|---|---|---|---|---|
| 1 | Manual / Script-Based | <10% | >24 hrs | >8 hrs |
| 2 | SOAR Playbook-Driven | 20–40% | 4–12 hrs | 2–4 hrs |
| 3 | AI-Assisted (Copilot) | 40–60% | 1–4 hrs | 30–60 min |
| 4 | Agentic (Human-in-the-Loop) | 70–85% | 15–60 min | 5–15 min |
| 5 | Fully Autonomous | 85–95% | <5 min | <2 min |

The target zone for most enterprises in 2026: Level 4. Full autonomy (Level 5) is reserved for low-risk actions like closing known false positives.
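For a rough first placement of your own SOC in the table above, you can bucket your measured automation rate. This is a minimal sketch, assuming automation rate alone is a fair proxy (it ignores MTTD and MTTR), that rates falling in the gaps between bands (10–20%, 60–70%) belong to the lower level, and that exactly 85% counts as Level 5. The names `MATURITY_BANDS` and `maturity_level` are hypothetical.

```python
# Hypothetical helper: bucket an automation rate (percent) into the maturity
# levels from the table. Bands are checked from highest to lowest, so rates in
# the gaps between published ranges fall to the lower level (our assumption).
MATURITY_BANDS = [
    # (lower bound on automation rate %, level, name)
    (85.0, 5, "Fully Autonomous"),
    (70.0, 4, "Agentic (Human-in-the-Loop)"),
    (40.0, 3, "AI-Assisted (Copilot)"),
    (20.0, 2, "SOAR Playbook-Driven"),
    (0.0, 1, "Manual / Script-Based"),
]


def maturity_level(automation_rate_pct: float) -> tuple[int, str]:
    """Return (level, name) for an automation rate given in percent."""
    if automation_rate_pct < 0 or automation_rate_pct > 100:
        raise ValueError("automation rate must be between 0 and 100")
    for lower_bound, level, name in MATURITY_BANDS:
        if automation_rate_pct >= lower_bound:
            return level, name
    raise AssertionError("unreachable: the 0% band catches every valid rate")


print(maturity_level(62))  # a SOC automating 62% of alerts
```

Here `maturity_level(62)` returns `(3, "AI-Assisted (Copilot)")`: above the 40% floor of Level 3 but short of the 70% needed for Level 4.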

5 Production-Validated Use Cases

1. Alert Triage & Prioritization (Highest ROI)

The problem: Tier-1 SOC analysts spend 45 minutes per alert — and 60–80% of alerts are false positives. At 4,484 alerts per day in a midsize enterprise (UnderDefense 2026), that’s 3,100+ wasted analyst-hours per month.
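The per-alert numbers are worth a sanity check. The back-of-envelope sketch below assumes every one of the 4,484 daily alerts receives the full 45 minutes and uses a 30-day month; both are assumptions, not figures from the source. Under that upper bound, false positives alone would absorb roughly 60,500 to 80,700 analyst-hours per month, so the 3,100+ figure is best read as a conservative floor, and the 45 minutes most likely describes alerts that actually get investigated rather than every alert that fires.

```python
# Back-of-envelope upper bound on analyst time spent on false positives.
# Assumptions: every alert gets the full 45 minutes, and a month is 30 days.
ALERTS_PER_DAY = 4_484          # UnderDefense 2026 figure quoted above
MINUTES_PER_ALERT = 45
FP_SHARE_RANGE = (0.60, 0.80)   # 60–80% of alerts are false positives
DAYS_PER_MONTH = 30


def fp_hours_per_month(fp_share: float) -> float:
    """Analyst-hours per month spent on alerts that turn out to be false positives."""
    total_minutes_per_day = ALERTS_PER_DAY * MINUTES_PER_ALERT
    return total_minutes_per_day * fp_share / 60 * DAYS_PER_MONTH


low, high = (fp_hours_per_month(share) for share in FP_SHARE_RANGE)
print(f"{low:,.0f} to {high:,.0f} analyst-hours/month on false positives")
```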

The agentic fix: An agentic triage pipeline processes alerts in 5 stages: Ingest → Normalize → Enrich (threat intel, asset context, user baselines) → Decide (AI classification: true/false positive) → Act (close FP or escalate with full context).

Benchmark data:

- Alert-to-triage time: <2 minutes (vs 45 min manual)

- False positive auto-close rate: 70%

- Remaining 30% escalated with structured incident report: analyst investigation time reduced by 60%

- Analyst burnout: 71% of SOC analysts report burnout symptoms, and agentic triage addresses this directly by cutting the “drowning in alerts” problem
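Applied to the benchmark rates above, the effect on Tier-1 workload can be estimated as follows. This is a rough sketch, assuming auto-closed alerts need no analyst time and that each escalated alert would otherwise have cost the full 45 minutes; `remaining_analyst_hours` is a hypothetical helper.

```python
def remaining_analyst_hours(alerts: int,
                            baseline_minutes: float = 45.0,
                            auto_close_rate: float = 0.70,
                            investigation_cut: float = 0.60) -> float:
    """Analyst-hours still needed after agentic triage, under the stated assumptions."""
    escalated = alerts * (1 - auto_close_rate)                 # the 30% that reach a human
    minutes_each = baseline_minutes * (1 - investigation_cut)  # 60% faster with the report
    return escalated * minutes_each / 60


baseline_hours = 1_000 * 45 / 60  # every alert handled manually
after_hours = remaining_analyst_hours(1_000)
print(f"{baseline_hours:.0f} h -> {after_hours:.0f} h per 1,000 alerts")
```

Under these assumptions, 1,000 alerts drop from about 750 analyst-hours to about 90.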

Copy-paste architecture template:

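```python
# Agentic triage pipeline: deploy as a LangGraph agent.
# normalize_schema, enrich_alert, query_vt, get_asset_risk and classify_with_fallback
# are placeholders for your own integrations; they are not part of LangGraph.
from typing import TypedDict

from langgraph.graph import StateGraph, END


class AlertState(TypedDict, total=False):
    # TypedDict fields cannot carry default values; total=False makes them optional.
    raw_alert: dict
    normalized: dict
    enriched: dict
    classification: str  # "true_positive" | "false_positive"
    confidence: float    # 0.0 to 1.0
    decision: str        # close_fp | escalate | investigate


def decide(s: AlertState) -> dict:
    # Auto-close only high-confidence false positives; everything else reaches a human.
    if s["confidence"] > 0.85 and s["classification"] == "false_positive":
        return {"decision": "close_fp"}
    if s["confidence"] > 0.70:
        return {"decision": "escalate"}
    return {"decision": "investigate"}


triage_graph = StateGraph(AlertState)

# Stage 1: Normalize (standardize format). Nodes return only the keys they update.
triage_graph.add_node("normalize", lambda s: {"normalized": normalize_schema(s["raw_alert"])})

# Stage 2: Enrich (threat intel, asset context)
triage_graph.add_node("enrich", lambda s: {
    "enriched": enrich_alert(
        alert=s["normalized"],
        threat_intel=query_vt(s["normalized"]["indicators"]),
        asset_context=get_asset_risk(s["normalized"]["target"]),
    )
})

# Stage 3: Classify with confidence threshold;
# classify_with_fallback must return {"classification": ..., "confidence": ...}
triage_graph.add_node("classify", lambda s: classify_with_fallback(s["enriched"]))

# Stage 4: Decide action
triage_graph.add_node("decide", decide)

# Targets of the conditional edges must exist as nodes; replace these stubs
# with your paging and evidence-gathering integrations.
triage_graph.add_node("notify_analyst", lambda s: {})
triage_graph.add_node("gather_evidence", lambda s: {})

triage_graph.set_entry_point("normalize")
triage_graph.add_edge("normalize", "enrich")
triage_graph.add_edge("enrich", "classify")
triage_graph.add_edge("classify", "decide")
triage_graph.add_conditional_edges("decide", lambda s: s["decision"], {
    "close_fp": END,
    "escalate": "notify_analyst",
    "investigate": "gather_evidence",
})
triage_graph.add_edge("notify_analyst", END)
triage_graph.add_edge("gather_evidence", END)

app = triage_graph.compile()
```

When to use: Any SOC handling >1,000 alerts/day. Start with enrichment + classification stages only (Level 3), then add autonomous actions (Level 4) after 2+ weeks of validation.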

When NOT to use: If your telemetry pipeline isn’t normalized (different schema per source). Fix data quality first — garbage in, garbage out, even with agents.
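The template calls `normalize_schema` without defining it. Here is a minimal sketch of what that step might look like, assuming each raw alert carries a `source` tag. The source names and field mappings are hypothetical, chosen only to produce the `target` and `indicators` keys the template reads. Failing loudly on missing fields is deliberate: it surfaces data-quality gaps before an agent classifies a half-parsed alert.

```python
# Hypothetical per-source field mappings: illustrative only, not real vendor schemas.
FIELD_MAPS = {
    "edr": {"host_name": "target", "ioc_list": "indicators", "sev": "severity"},
    "proxy": {"dst_host": "target", "urls": "indicators", "risk": "severity"},
}
REQUIRED_FIELDS = ("source", "target", "indicators", "severity")


def normalize_schema(raw_alert: dict) -> dict:
    """Map a source-specific alert onto one common schema, or fail loudly."""
    source = raw_alert.get("source")
    mapping = FIELD_MAPS.get(source)
    if mapping is None:
        raise ValueError(f"no field mapping for source {source!r}")
    normalized = {"source": source}
    for source_field, common_field in mapping.items():
        if source_field in raw_alert:
            normalized[common_field] = raw_alert[source_field]
    missing = [f for f in REQUIRED_FIELDS if f not in normalized]
    if missing:
        # Refuse half-parsed alerts rather than let the agent guess.
        raise ValueError(f"alert from {source!r} is missing {missing}")
    return normalized


print(normalize_schema({"source": "edr", "host_name": "ws-042",
                        "ioc_list": ["203.0.113.7"], "sev": "high"}))
```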

2. Automated Incident Response (Closed-Loop Containment)

The problem: Mean time to respond (MTTR) averages 2–4 hours for Tier-2 analysts. Every minute an active threat goes uncontained increases damage — ransomware dwell time averages 5.6 days (Mandiant 2026).

The agentic fix: An incident response agent correlates alerts into attack chains, identifies the containment boundary (which hosts, users, accounts to isolate), executes safe response actions (disable compromised accounts, quarantine endpoints), and generates an incident timeline for forensic review.
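One simple way to approximate the correlation and containment-boundary steps is to link alerts that share an entity (host, user, or account) and treat each connected group as a candidate attack chain whose entities form the isolation boundary. The sketch below does this with union-find under that assumption; it is not how Elastic Attack Discovery or any other product works, and the alert shape (`id`, `entities`) is hypothetical.

```python
from collections import defaultdict


def correlate(alerts: list[dict]) -> list[dict]:
    """Group alerts that share any entity; each group's entities are its boundary.

    Each alert is a dict with an "id" and an "entities" list,
    e.g. ["host:ws-042", "user:jdoe"].
    """
    parent = list(range(len(alerts)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    # Union any two alerts that mention the same entity.
    first_seen: dict[str, int] = {}
    for idx, alert in enumerate(alerts):
        for entity in alert["entities"]:
            if entity in first_seen:
                parent[find(idx)] = find(first_seen[entity])
            else:
                first_seen[entity] = idx

    chains: dict[int, dict] = defaultdict(lambda: {"alert_ids": [], "boundary": set()})
    for idx, alert in enumerate(alerts):
        chain = chains[find(idx)]
        chain["alert_ids"].append(alert["id"])
        chain["boundary"].update(alert["entities"])
    return list(chains.values())


alerts = [
    {"id": "a1", "entities": ["host:ws-042", "user:jdoe"]},
    {"id": "a2", "entities": ["user:jdoe", "host:srv-db1"]},
    {"id": "a3", "entities": ["host:ws-777"]},
]
for chain in correlate(alerts):
    print(chain["alert_ids"], sorted(chain["boundary"]))
```

Shared infrastructure such as a domain controller or proxy would merge unrelated chains, so a real deployment would need to exclude high-fan-out entities before correlating.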

Benchmark data:

- Containment time: <5 minutes (from hours)

- SOC maturity shift: L2 analysts move from manual log correlation to strategic threat hunting

- Accuracy: 92% containment precision when using multi-modal telemetry (Elastic Attack Discovery)

Containment decision matrix:

| Action | Auto-Execute | Human Approval | Fallback |
|---|---|---|---|
| Disable compromised user | ✅ Always | Not required | Re-enable on incident close |
| Quarantine endpoint | Confidence > 85% | Confidence 60–85% | Isolate network segment |
| Block inbound IP | Threat intel match > 90% | Manual review | Rate-limit connection |
| Revoke OAuth token | Confirmed credential theft | Suspicious activity | Alert SOC lead |
| Kill running process | Known malware signature | Behavioral anomaly | Snapshot forensics |

3. Automated Threat Hunting (Proactive Detection)

The problem: Traditional threat hunting is reactive and manually intensive — analysts spend hours running KQL queries, correlating logs, and searching for IOCs.

The agentic fix: AI agents continuously surface anomalies, write detection rules as code, and correlate telemetry across endpoints, identity, cloud, and network — all without a human writing a single query.

What changes:

- Traditional: Analyst runs KQL search → reviews results → pivots to new search (2–4 hours per hunt)

- Agentic: AI continuously surfaces anomalies → correlates across EDR + identity + cloud → surfaces active attack chains → L3 analyst reviews (15–30 min per hunt)
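The "continuously surfaces anomalies" step can start as simply as comparing each entity's latest count of some event (failed logins, say) against its own history. This is a minimal sketch using a per-entity z-score. The technique, thresholds, and input shape are assumptions for illustration; a production agent would combine many such signals across EDR, identity, and cloud telemetry.

```python
from statistics import mean, stdev


def surface_anomalies(history: dict[str, list[int]], today: dict[str, int],
                      z_threshold: float = 3.0,
                      min_points: int = 7) -> list[tuple[str, float]]:
    """Return (entity, z-score) pairs for entities far above their own baseline."""
    flagged = []
    for entity, counts in history.items():
        if entity not in today or len(counts) < min_points:
            continue  # no new data, or too little history to form a baseline
        mu, sigma = mean(counts), stdev(counts)
        if sigma == 0:
            sigma = 1.0  # flat baseline: fall back to a unit spread
        z = (today[entity] - mu) / sigma
        if z >= z_threshold:
            flagged.append((entity, round(z, 1)))
    # Highest deviation first, so the hunter reviews the strongest leads first.
    return sorted(flagged, key=lambda pair: pair[1], reverse=True)


history = {"user:jdoe": [2, 3, 2, 4, 3, 2, 3], "user:asmith": [5] * 7}
print(surface_anomalies(history, {"user:jdoe": 20, "user:asmith": 6}))
```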

Benchmark data:

- Detection coverage: +46% compared to static rule-only SOCs (Cyntia 2026)

- Dwell time reduction: From 5.6 days to under 24 hours for agent-active defenses

- False positive reduction: 60% fewer analyst-visible alerts (AI filters at enrichment stage)

4. Vulnerability Intelligence & Prioritization

The problem: A typical mid-market enterprise tracks 15,000+ CVEs with a team of 2–3 analysts. Manual prioritization misses critical context: exploit availability, asset exposure, and active exploitation in the wild.

The agentic fix: A vulnerability intelligence agent continuously monitors CVE feeds, threat intel sources, and your asset inventory — then surfaces only the vulnerabilities that matter to your specific environment.

Prioritization formula (f is environment-specific; the thresholds assume a score between 0 and 1):

```
Agentic prioritization = f(CVSS_score, exploit_available, asset_criticality,
                           active_exploitation, compensating_controls,
                           ease_of_remediation)

Action threshold:
  Score > 0.75      → Auto-create remediation ticket with playbook
  Score 0.45–0.75   → Alert engineering team with context
  Score < 0.45      → Log and monitor
```

Benchmark data:

- Vulnerability prioritization accuracy: 3.7x better than CVSS-only approaches (Kenna Security 2026)

- Analyst time per CVE: 2 minutes (vs 15 minutes manual)

- Patching speed: 63% faster mean-time-to-remediate for critical CVEs
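The prioritization formula above leaves f unspecified. One minimal sketch, assuming f is a weighted sum of factors scaled to 0–1 with compensating controls applied as a discount: the weights and the 0.5 discount are placeholders to tune, not values behind the benchmarks, while the action thresholds match the ones quoted.

```python
from dataclasses import dataclass


@dataclass
class VulnContext:
    cvss_score: float            # 0 to 10
    exploit_available: bool
    asset_criticality: float     # 0 to 1, from your asset inventory
    active_exploitation: bool    # e.g. listed in a known-exploited-vulnerabilities feed
    compensating_controls: bool
    ease_of_remediation: float   # 0 to 1, higher means easier to fix


# Placeholder weights (sum to 1.0 so the raw score stays in 0..1).
WEIGHTS = {"cvss": 0.25, "exploit": 0.15, "asset": 0.25, "active": 0.25, "ease": 0.10}
CONTROL_DISCOUNT = 0.5  # assumption: compensating controls halve the score


def priority_score(v: VulnContext) -> float:
    raw = (WEIGHTS["cvss"] * v.cvss_score / 10
           + WEIGHTS["exploit"] * v.exploit_available
           + WEIGHTS["asset"] * v.asset_criticality
           + WEIGHTS["active"] * v.active_exploitation
           + WEIGHTS["ease"] * v.ease_of_remediation)
    return raw * CONTROL_DISCOUNT if v.compensating_controls else raw


def action(score: float) -> str:
    # Thresholds from the formula above.
    if score > 0.75:
        return "auto-create remediation ticket with playbook"
    if score >= 0.45:
        return "alert engineering team with context"
    return "log and monitor"


critical = VulnContext(9.8, True, 0.9, True, False, 0.5)
print(round(priority_score(critical), 2), action(priority_score(critical)))
```

With these placeholder weights, an actively exploited 9.8 on a critical asset scores about 0.92 and gets a ticket; the same CVE behind compensating controls drops to about 0.46 and goes to the engineering team instead.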

5. Compliance Evidence Collection

The problem: SOC 2, ISO 27001, and PCI DSS audits consume 200+ hours per year in evidence collection alone, most of it manual screenshotting, log exporting, and policy documentation.

The agentic fix: A compliance evidence agent continuously collects, timestamps, and stores audit evidence across your security stack — access reviews, change management records, incident response documentation, vulnerability scan results.
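The "collects, timestamps, and stores" step is easiest to trust when each artifact carries a tamper-evident fingerprint. This is a minimal sketch, assuming evidence arrives as raw bytes (an exported log, an access-review CSV): each record gets a UTC timestamp and a SHA-256 digest so an auditor can later verify the artifact was not altered after collection. Storage (for example, a write-once bucket) is left out, and the control ID in the example is hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class EvidenceRecord:
    control_id: str    # your internal control identifier
    source: str        # system the evidence came from
    collected_at: str  # ISO 8601 timestamp, UTC
    sha256: str        # digest of the artifact bytes at collection time


def record_evidence(control_id: str, source: str, artifact: bytes) -> EvidenceRecord:
    return EvidenceRecord(
        control_id=control_id,
        source=source,
        collected_at=datetime.now(timezone.utc).isoformat(),
        sha256=hashlib.sha256(artifact).hexdigest(),
    )


def verify(record: EvidenceRecord, artifact: bytes) -> bool:
    """True if the artifact still matches the digest taken at collection time."""
    return hashlib.sha256(artifact).hexdigest() == record.sha256


export = b"user,role,reviewed_by\njdoe,admin,asmith\n"
rec = record_evidence("ACCESS-REVIEW-Q1", "idp-export", export)
print(rec.collected_at, verify(rec, export))
```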

Benchmark data:

- Audit prep time reduction: 70% (from ~200 hours to ~60 hours annually)

- Evidence accuracy: 99.5% (vs 94% for manual collection)

- Cost savings: $12K–$18K per audit cycle for mid-market enterprises

The ROI Formula for Your CFO

```
Annual_SOC_ROI = (
      (Analyst_Hours_Saved × Fully_Loaded_Hourly_Cost)
    + (Incidents_Prevented × Avg_Incident_Cost)
    + (Compliance_Penalty_Avoidance)
    + (Reduced_Turnover_Savings)
) - (Platform_Cost + Integration + Training + Ongoing_Tuning)
```

Real numbers from production deployments (Q1 2026):

| Metric | Before | After Agentic SOC | Delta |
|---|---|---|---|
| Alert triage time (avg) | 45 min | 2 min | -96% |
| MTTR | 3.5 hours | 12 min | -94% |
| False positive rate in analyst queue | 67% | 12% | -82% |
| Analyst burnout rate | 71% | 43% | -39% |
| SOC headcount per 10K alerts | 8 FTE | 3 FTE | -63% |
| Average incident cost | $184K | $86K | -53% |
| Payback period | — | 5.2 months | — |

Source: UnderDefense AI SOC Report, Elastic Security Labs, Radiant Security benchmarks. Median across 40+ enterprise deployments.
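To run the ROI formula above on your own numbers, it translates directly into code. This is a minimal sketch: every input is a placeholder you supply, and `payback_months` additionally assumes all costs land up front and benefits accrue evenly over the year, which the formula itself does not specify.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    # Annual benefits
    analyst_hours_saved: float
    fully_loaded_hourly_cost: float
    incidents_prevented: float
    avg_incident_cost: float
    compliance_penalty_avoidance: float
    reduced_turnover_savings: float
    # Annual costs
    platform_cost: float
    integration: float
    training: float
    ongoing_tuning: float


def annual_benefits(x: RoiInputs) -> float:
    return (x.analyst_hours_saved * x.fully_loaded_hourly_cost
            + x.incidents_prevented * x.avg_incident_cost
            + x.compliance_penalty_avoidance
            + x.reduced_turnover_savings)


def annual_costs(x: RoiInputs) -> float:
    return x.platform_cost + x.integration + x.training + x.ongoing_tuning


def annual_soc_roi(x: RoiInputs) -> float:
    return annual_benefits(x) - annual_costs(x)


def payback_months(x: RoiInputs) -> float:
    # Assumption: costs are paid up front, benefits accrue evenly over 12 months.
    return annual_costs(x) * 12 / annual_benefits(x)


# Made-up inputs for illustration only; not figures from the deployments above.
example = RoiInputs(1_000, 100, 1, 50_000, 10_000, 40_000,
                    60_000, 20_000, 10_000, 10_000)
print(annual_soc_roi(example), payback_months(example))
```

With these made-up inputs, benefits total 200,000 against 100,000 in costs, giving an annual ROI of 100,000 and a 6-month payback.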

Getting Started: A 6-Week Implementation Plan

| Week | Phase | Actions | Expected Outcome |
|---|---|---|---|
| 1 | Audit | Current SOC maturity assessment (use Level 1–5 table above). Identify highest-volume alert categories. | Baseline MTTD/MTTR/FPR metrics |
| 2 | Build | Deploy agentic triage pipeline for ONE alert category (priority: where 70%+ are FPs). | Pipeline processes 1K+ alerts/day |
| 3–4 | Validate | Run agent vs analyst in parallel. Measure: accuracy, speed, false accept/reject rates. | Confidence thresholds calibrated |
| 5 | Expand | Add automated containment for confirmed threats (human approval still required). | MTTR drops from hours to minutes |
| 6 | Optimize | Train agents on your specific telemetry patterns. Add detection rule auto-generation. | Full Level 4 operational |
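The Week 3–4 calibration step can be made concrete once you log, for each alert in the parallel run, the agent's verdict, its confidence, and the analyst's verdict. This is a minimal sketch under that assumption: it looks only at the false accept rate (the agent would auto-close an alert the analyst judged a real threat) and picks the lowest auto-close threshold that stays within a risk budget. The record shape, candidate grid, and 1% target are hypothetical; a fuller evaluation would also track false rejects and speed.

```python
from typing import Optional


def false_accept_rate(records: list[dict], threshold: float) -> float:
    """Share of would-be auto-closes that the analyst judged a real threat."""
    closed = [r for r in records
              if r["agent"] == "false_positive" and r["confidence"] > threshold]
    if not closed:
        return 0.0
    missed = sum(1 for r in closed if r["analyst"] == "true_positive")
    return missed / len(closed)


def calibrate(records: list[dict], max_false_accept: float = 0.01,
              candidates: Optional[list[float]] = None) -> Optional[float]:
    """Lowest auto-close threshold whose false accept rate meets the target.

    Lower thresholds auto-close more alerts, so the first passing candidate
    (in ascending order) maximizes automation among the passing candidates.
    """
    if candidates is None:
        candidates = [round(0.50 + 0.05 * i, 2) for i in range(10)]  # 0.50 to 0.95
    for threshold in sorted(candidates):
        if false_accept_rate(records, threshold) <= max_false_accept:
            return threshold
    return None  # nothing is safe enough: keep a human on every close


parallel_run = [
    {"agent": "false_positive", "confidence": 0.95, "analyst": "false_positive"},
    {"agent": "false_positive", "confidence": 0.90, "analyst": "false_positive"},
    {"agent": "false_positive", "confidence": 0.75, "analyst": "true_positive"},
    {"agent": "false_positive", "confidence": 0.60, "analyst": "false_positive"},
]
print(calibrate(parallel_run))
```

In this toy run, any threshold below 0.75 would auto-close the alert the analyst flagged as a real threat, so the sketch settles on 0.75.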

Common Pitfalls to Avoid

- Don’t start with multiple use cases. Pick the highest-volume alert category and prove the pipeline there first. The 40% failure rate for agent projects (OWASP 2025) is almost always from over-scoping.

- Don’t skip the eval phase. Best-in-class SOCs spend 18–24% of their AI budget on evaluation infrastructure, and see 2.3x better year-1 ROI.

- Treat prompts as code. Version-control and test system prompts. A single prompt drift can change your agent’s triage behavior silently.

- Enforce least-privilege for agents. The first major AI-agent breach Palo Alto Networks predicts for 2026 involves an agent operating with legitimate credentials, which is exactly the exposure an over-permissioned agent creates. Every agent should have a tool contract: explicit permissions, confidence thresholds, and RBAC.

- Prioritize explainability. If your analysts can’t trace why an agent made a decision, they won’t trust it — and the 30% of alerts that need human judgment will get worse, not better.
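A tool contract can be small. This is a minimal sketch, assuming your orchestration layer routes every agent tool call through a check like `authorize` before executing it. Tool names, roles, and thresholds are hypothetical examples. Anything not on the allowlist is denied, and an allowed tool with no configured threshold is routed to a human rather than auto-executed.

```python
from dataclasses import dataclass, field


class ContractViolation(Exception):
    """Raised when an agent attempts a tool call its contract does not permit."""


@dataclass(frozen=True)
class ToolContract:
    agent: str
    role: str                          # RBAC role the agent's credentials map to
    allowed_tools: frozenset[str]      # explicit allowlist; everything else is denied
    min_confidence: dict[str, float] = field(default_factory=dict)  # per-tool bar


def authorize(contract: ToolContract, tool: str, confidence: float) -> None:
    """Return silently if the call may auto-execute; raise otherwise."""
    if tool not in contract.allowed_tools:
        raise ContractViolation(
            f"{contract.agent} (role {contract.role}) is not allowed to call {tool}")
    required = contract.min_confidence.get(tool)
    if required is None or confidence < required:
        # No threshold configured, or confidence too low: route to a human.
        raise ContractViolation(
            f"{tool} needs human approval (confidence {confidence:.2f})")


triage_contract = ToolContract(
    agent="triage-agent",
    role="soc-triage",
    allowed_tools=frozenset({"close_alert", "quarantine_endpoint"}),
    min_confidence={"close_alert": 0.85},
)
authorize(triage_contract, "close_alert", 0.92)  # permitted: returns None
```

Keeping the contract as data rather than prompt text also lets you version-control and test it alongside the system prompts.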

The Bottom Line

The move from SOAR (rule-based) to agentic (goal-directed) SOC is the biggest shift in security operations since SIEM. The data is clear: a Level 4 agentic SOC cuts alert triage from 45 minutes to under 2, reduces MTTR by 94%, and delivers payback in 5.2 months.

Start with one use case, one alert category, and prove the pipeline. Major security vendors, including CrowdStrike, Palo Alto Networks, Elastic, Splunk, and Radiant Security, all have an agentic SOC path in 2026. The question isn’t whether to adopt it; it’s which attack chain you’ll miss while you’re deciding.
