LLM Context in 2026: Long Context vs RAG Decision Guide

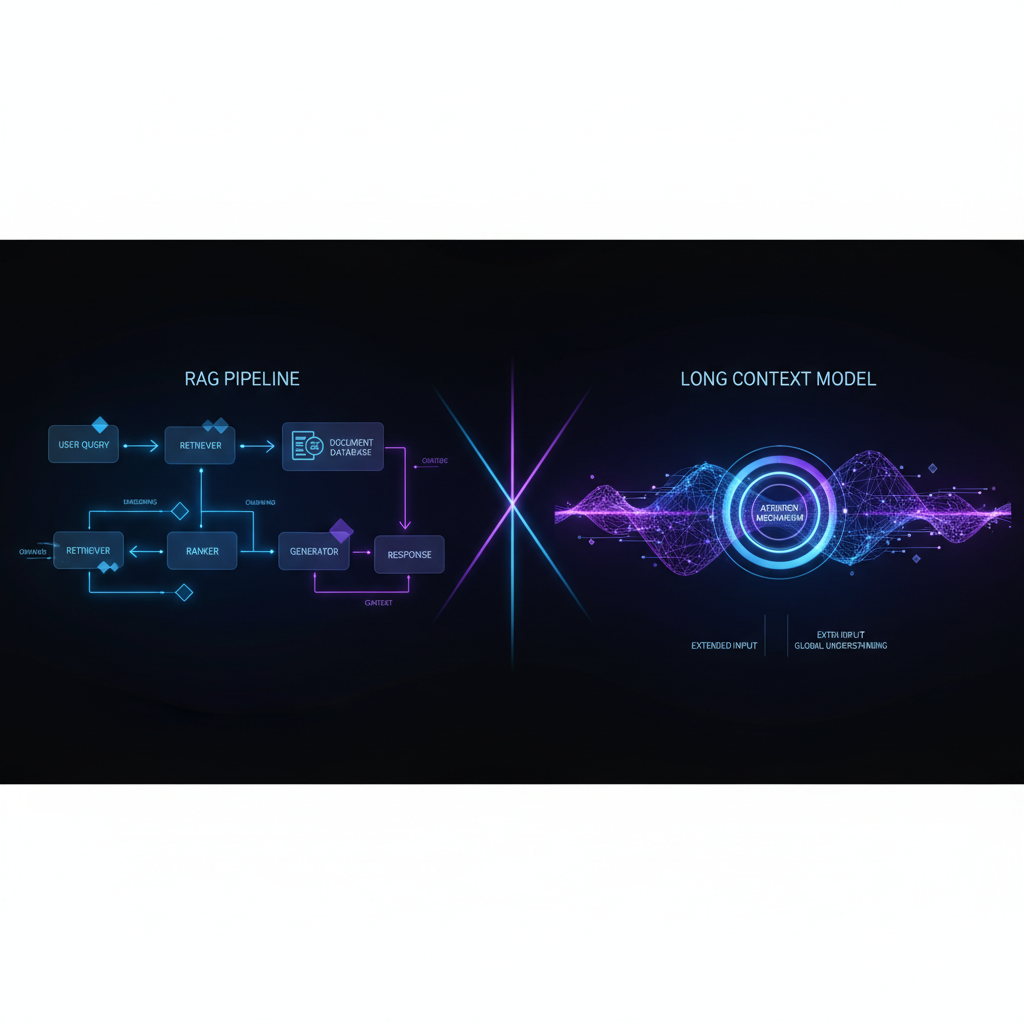

The short version: In 2026, the frontier models advertise 1M-token context windows. Gemini 3.1 Pro, GPT-5.5, and Claude Opus 4.7 all claim one. But “can accept 1M tokens” and “can reason over 1M tokens” are two different things. Multi-fact recall in long-context models hovers around 60%, meaning roughly 40% of the facts you need go missing, most often from the middle of your prompt. Meanwhile, a well-tuned RAG pipeline costs about 1,250x less per query than even a 100K-token prompt and completes in under 2 seconds. The winning strategy isn’t picking one; it’s building a routing system that sends each query to the right tool.

The 1M-Token Promise vs Reality

Every major model vendor shipped a 1M+ context window between 2025 and 2026. The promise: dump your entire document corpus into the prompt and the model will find what it needs. The reality is more nuanced.

What the Benchmarks Actually Say

The standard “needle in a haystack” (NIAH) test — hide a single fact somewhere in a long document — looks great on paper. Gemini 1.5 Pro achieved 99.7% recall on NIAH. But the problem is what NIAH doesn’t measure.

| Test | What It Measures | Typical Score (1M context) | Notes |
|---|---|---|---|
| NIAH | Single-fact recall | 99%+ | Only tests 1 needle, high vocab overlap |
| NoLiMa | Multi-fact recall | ~60-70% | Realistic: multiple facts needed |
| NeedleChain | Chained reasoning across positions | ~40-50% | Correlates with actual production failures |
| LongBench v2 | Multi-document QA | Varies by model | Most realistic general benchmark |

The gap between NIAH and realistic benchmarks is where production failures live. GPT-5.5 leads on MRCRv2 (coreference resolution at 128-256K) with a score of 87.5 — the only published score we have for the current top-tier models on realistic long-context evals.

The “Lost in the Middle” Problem (Still Alive in 2026)

The U-shaped accuracy curve was first documented in 2023 (Liu et al.), and long-context degradation was confirmed again in 2024 by the RULER benchmark across 17 long-context models. Facts at the start or end of a prompt are retrieved accurately. Accuracy for facts in the middle drops by 20+ percentage points.

“Bigger context windows did not fix it. They just gave you more middle to lose things in.” — Gabriel Anhaia, 2026 replication study

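This isn’t a simple bug. It appears to follow from how transformer attention distributes weight over positions, and it has persisted across model generations. Bigger context windows make it worse because the share of the prompt that counts as “middle” grows with the window, while the well-recalled edges do not.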

The Cost and Latency Reality

Here’s where long-context enthusiasm meets production math.

| Approach | Cost per Query | Latency | Reliability |
|---|---|---|---|
| Long context (100K tokens) | $0.10-$0.25 input | ~5-15s | 60-70% multi-fact recall |
| Long context (1M tokens) | $1.74-$6.75 input | ~30-60s | ~50-60% multi-fact recall |
| RAG pipeline | ~$0.00008 per query | <2s | 80-90% with good reranking |

Source data from Tian Pan’s 2026 production decision framework and BenchLM pricing comparison.

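The 1,250x cost gap isn’t a rounding error, and it is the conservative end of the range: it compares RAG at ~$0.00008 with a 100K-token prompt at $0.10. At 10,000 queries/day, a RAG pipeline costs $0.80/day, versus $1,000-$2,500/day for long context at 100K tokens and $17,400-$67,500/day at 1M tokens.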
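The arithmetic can be checked in a few lines. This is a minimal sketch that uses only the per-query prices from the table above and the 10,000 queries/day volume from the text; the function and constant names are illustrative.

```python
# Per-query input costs in USD, copied from the table above.
RAG_COST = 0.00008
LONG_CONTEXT_COSTS = {
    "100K tokens": (0.10, 0.25),
    "1M tokens": (1.74, 6.75),
}
QUERIES_PER_DAY = 10_000  # illustrative volume used in the text


def daily_cost(cost_per_query: float, queries: int = QUERIES_PER_DAY) -> float:
    """Daily spend for a given per-query cost."""
    return cost_per_query * queries


def cost_ratio(long_context_cost: float, rag_cost: float = RAG_COST) -> float:
    """How many times more a long-context query costs than a RAG query."""
    return long_context_cost / rag_cost


if __name__ == "__main__":
    print(f"RAG: ${daily_cost(RAG_COST):,.2f}/day")
    for label, (low, high) in LONG_CONTEXT_COSTS.items():
        print(
            f"Long context ({label}): "
            f"${daily_cost(low):,.0f}-${daily_cost(high):,.0f}/day, "
            f"{cost_ratio(low):,.0f}x-{cost_ratio(high):,.0f}x the RAG cost"
        )
```

Swap in your own provider prices and query volume before drawing conclusions; the ratio is very sensitive to how many tokens the RAG path actually sends.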

The Quadratic Scaling Trap

Self-attention compute scales as O(n²) in sequence length, so doubling the context roughly quadruples the attention cost during prefill, while the KV cache grows linearly with the number of tokens. A 1M-token KV cache requires roughly 100GB of GPU memory per session. That combination is why latency jumps from 5s at 100K tokens to 45s+ at 1M tokens. The model isn’t being lazy; the math is against it.
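To make the scaling concrete, here is a back-of-the-envelope sketch. The model shape (48 layers, 64 attention heads, 8 KV heads, head dimension 128, 2-byte cache values) is a hypothetical configuration chosen for illustration, not the architecture of any model named in this post, and the FLOP count covers only the two attention matmuls.

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to store keys and values for every layer and token."""
    # Factor 2 = one key vector plus one value vector per KV head.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


def attention_flops(tokens: int, layers: int, heads: int, head_dim: int) -> int:
    """Rough prefill FLOPs for the attention matmuls only (QK^T and scores @ V)."""
    # Each matmul is about 2 * n^2 * head_dim FLOPs per head. Causal masking
    # would roughly halve this, which does not change the n^2 growth.
    return 2 * 2 * tokens * tokens * head_dim * heads * layers


# Hypothetical model shape, chosen only for illustration.
MODEL = {"layers": 48, "heads": 64, "kv_heads": 8, "head_dim": 128}

if __name__ == "__main__":
    for n in (100_000, 1_000_000):
        gb = kv_cache_bytes(n, MODEL["layers"], MODEL["kv_heads"], MODEL["head_dim"]) / 1e9
        flops = attention_flops(n, MODEL["layers"], MODEL["heads"], MODEL["head_dim"])
        print(f"{n:>9,} tokens: KV cache ~{gb:.1f} GB, attention ~{flops:.2e} FLOPs")
```

Going from 100K to 1M tokens multiplies the KV cache by 10 and the attention FLOPs by 100 whatever shape you plug in; the absolute numbers depend on the real architecture and cache precision.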

Caching Changes the Equation

| Model | Full Price (per 1M input tokens) | Cached Price (per 1M input tokens) | Savings |
|---|---|---|---|
| Claude Opus 4.7 Adaptive | $5.00 | $0.50 | 90% off |
| DeepSeek V4 Pro | $1.74 | $0.145 | 92% off |
| GPT-5.5 | $5.00 | ~$0.50 | ~90% off |

Prompt caching works for repeated reads of the same document — codebase reviews, legal document analysis, recurring reports. It does NOT help for one-shot queries against fresh documents, which is the majority of Q&A workloads.
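A quick way to see when caching pays off is to compare total input cost over repeated reads of the same document. The sketch below uses the $5.00 full and $0.50 cached prices from the table; `write_multiplier` is a hypothetical knob for providers that charge extra for the first, cache-writing request, and it defaults to no surcharge.

```python
def cost_without_cache(tokens: int, reads: int, full_price_per_m: float) -> float:
    """Total input cost when every read pays the full price."""
    return reads * tokens / 1_000_000 * full_price_per_m


def cost_with_cache(tokens: int, reads: int, full_price_per_m: float,
                    cached_price_per_m: float, write_multiplier: float = 1.0) -> float:
    """First read pays full price (times an optional write surcharge),
    every later read pays the cached price."""
    if reads < 1:
        return 0.0
    first = tokens / 1_000_000 * full_price_per_m * write_multiplier
    rest = (reads - 1) * tokens / 1_000_000 * cached_price_per_m
    return first + rest


if __name__ == "__main__":
    doc_tokens = 500_000  # illustrative document size
    for reads in (1, 5, 20):
        plain = cost_without_cache(doc_tokens, reads, 5.00)
        cached = cost_with_cache(doc_tokens, reads, 5.00, 0.50)
        print(f"{reads:>2} reads: ${plain:.2f} uncached vs ${cached:.2f} cached")
```

With a single read the two totals are identical, which is the one-shot case described above; the savings only appear once the same prefix is read again while the cache entry is still alive.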

The Decision Framework: 5 Factors

Here’s a copy-paste framework for deciding whether to use long context, RAG, or a hybrid approach.

Factor 1: Corpus Size

| Size | Recommended Approach | Why |
|---|---|---|
| <100K tokens | Either works | Both fit within the reliably usable context window |
| 100K-500K | Hybrid | RAG for factual lookups, LC for synthesis |
| 500K-1M | RAG first | Typically only ~20% of the data is relevant per query |
| >1M | RAG only | No model handles this in one pass |

Factor 2: Relevance Ratio

If 90% of your data is irrelevant to any given question, sending it all in is both wasteful and actively harmful to accuracy. When the relevance ratio drops below ~20%, RAG can outperform full-context stuffing by 13+ F1 points on standard benchmarks while using well under half the token budget.

Factor 3: Query Volume

| Queries/month | Context Budget | Winning Strategy |
|---|---|---|
| <100 | Generous | Long context fine, cost negligible |
| 100-10,000 | Moderate | Hybrid routing recommended |
| 10,000+ | Tight | RAG mandatory, LC for exceptions only |

Factor 4: Latency SLO

- Interactive (<2s): RAG is the only path. Long context at 100K+ takes 5-45s.

- Async (acceptable 30-60s): Long context is viable for high-value queries.

- Batch (overnight): Long context with prompt caching is cost-effective.

Factor 5: Data Freshness

- Real-time updates (live docs, chat logs): RAG wins, since incremental reindexing is cheap (only new or changed chunks need to be re-embedded).

- Static corpora (policies, manuals, codebases): Long context with KV-cache amortization is viable.

The Copy-Paste Decision Matrix

Copy this table into your architecture doc and fill it out for your use case:

| Factor | Your Value | Score (1-5) | Weight | Weighted |
|--------|-----------|-------------|--------|----------|
| Corpus size (<100K=5, >1M=1) | | | 0.25 | |
| Relevance ratio (>50%=5, <10%=1) | | | 0.25 | |
| Query volume (<100/mo=5, >10K=1) | | | 0.20 | |
| Latency SLO (<2s=1, async=5) | | | 0.15 | |
| Data freshness (static=5, live=1) | | | 0.15 | |
| **Total** | | | **1.0** | |

Score interpretation:

- >4.0: Long context preferred for most queries

- 2.5-4.0: Hybrid routing needed

- <2.5: RAG should be your default
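If you would rather compute the matrix than fill it in by hand, the weights and thresholds above translate directly into a small helper. This is a sketch: the factor keys and function names are made up for illustration, and the 1-5 scores are still your own judgment calls.

```python
# Weights copied from the decision matrix above; they sum to 1.0.
WEIGHTS = {
    "corpus_size": 0.25,
    "relevance_ratio": 0.25,
    "query_volume": 0.20,
    "latency_slo": 0.15,
    "data_freshness": 0.15,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Weighted total of the five factor scores, each on a 1-5 scale."""
    for factor in WEIGHTS:
        if factor not in scores:
            raise ValueError(f"missing score for {factor!r}")
        if not 1 <= scores[factor] <= 5:
            raise ValueError(f"score for {factor!r} must be between 1 and 5")
    return sum(WEIGHTS[f] * scores[f] for f in WEIGHTS)


def recommend(total: float) -> str:
    """Map the weighted total to the interpretation bands above."""
    if total > 4.0:
        return "long_context"
    if total >= 2.5:
        return "hybrid"
    return "rag"


if __name__ == "__main__":
    # Example: large corpus, low relevance ratio, high volume, interactive SLO.
    example = {"corpus_size": 2, "relevance_ratio": 1, "query_volume": 1,
               "latency_slo": 1, "data_freshness": 3}
    total = weighted_score(example)
    print(f"score={total:.2f} -> {recommend(total)}")
```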

The Hybrid Architecture (Current Best Practice)

The most cost-effective pattern emerging in 2026 production deployments is a three-tier routing system.

Tier 1: Query Classifier

A simple rule-based or LLM classifier that determines the query type:

```python
import re


def classify_query(query: str, num_docs: int) -> str:
    """
    Classify a query into one of three types.

    When to use: Route every incoming query through this classifier
    before deciding how to answer it.

    When NOT to use: If all your queries are the same type (e.g.,
    all factual lookups), skip the router and go straight to RAG.
    """
    q = query.lower()

    # Signal 1: Implicit exploration (no specific fact to find)
    implicit_signals = [
        "what are the most concerning", "summarize",
        "what's the overall", "give me an overview",
        "key findings", "main points",
    ]
    is_implicit = any(s in q for s in implicit_signals)

    # Signal 2: Specific fact lookup. These queries fall through to the
    # RAG default below; the flag is kept to document the intent.
    has_specific_target = any(
        kw in q for kw in [
            "how much", "when did", "who said",
            "what is the", "where does", "how many",
        ]
    )

    # Signal 3: Cross-document synthesis. "vs" and "versus" are matched as
    # whole words so that words like "canvas" do not trigger this route.
    needs_synthesis = (
        "compare" in q
        or "difference between" in q
        or re.search(r"\b(?:versus|vs)\b", q) is not None
    )

    if is_implicit and num_docs > 5:
        return "long_context"  # Global understanding needed
    elif needs_synthesis and num_docs <= 10:
        return "hybrid"  # RAG + LC combination
    else:
        return "rag"  # Default to cost-efficient retrieval
```

Tier 2: Smart Retrieval

For RAG and hybrid routes:

```text
Vector search (50 candidates)
  → Reranker (top 10)
  → Place top score FIRST, second top LAST
  → Place remaining 8 in the middle
  → Inject user question right before the answer slot
```

This edge-placement strategy alone recovers 10-15% of lost-in-the-middle recall with zero extra cost.
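The reordering step is only a few lines of code. Here is a minimal sketch, assuming the reranker hands back chunk texts sorted best-first; `edge_place` and `build_prompt` are illustrative names, and the prompt template is a placeholder rather than a recommended format.

```python
def edge_place(chunks_ranked: list[str]) -> list[str]:
    """Put the best chunk first and the second-best last; the remaining
    chunks keep their rank order in the middle."""
    if len(chunks_ranked) < 3:
        # With one or two chunks, rank order already puts them at the edges.
        return list(chunks_ranked)
    best, second, *rest = chunks_ranked
    return [best, *rest, second]


def build_prompt(chunks_ranked: list[str], question: str) -> str:
    """Assemble the context with edge placement and put the question
    right before the answer slot."""
    context = "\n\n".join(edge_place(chunks_ranked))
    return f"{context}\n\nQuestion: {question}\nAnswer:"


if __name__ == "__main__":
    ranked = [f"chunk ranked #{i}" for i in range(1, 11)]  # reranker top 10
    print(build_prompt(ranked, "What changed in the 2024 policy?"))
```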

Tier 3: Context Budget

Don’t over-fetch. An order-preserving approach with 48K well-chosen tokens outperforms full-context retrieval at 117K tokens by 13 F1 points, at roughly 40% of the token budget.
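One way to enforce a budget is to pick the highest-scoring chunks greedily until the budget is spent, then restore their original document order. The sketch below assumes each chunk already carries a relevance score and a token count; the `Chunk` type and `select_within_budget` are hypothetical names, not part of any library. It restores document order rather than applying the edge placement from Tier 2, so measure which ordering works better on your own data.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    position: int   # index of the chunk in the original document
    score: float    # relevance score from the retriever or reranker
    text: str
    tokens: int     # token count, assumed to be precomputed


def select_within_budget(chunks: list[Chunk], budget_tokens: int) -> list[Chunk]:
    """Greedily keep the highest-scoring chunks that fit the token budget,
    then return them in original document order."""
    chosen: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        if used + chunk.tokens <= budget_tokens:
            chosen.append(chunk)
            used += chunk.tokens
    return sorted(chosen, key=lambda c: c.position)


if __name__ == "__main__":
    candidates = [
        Chunk(0, 0.2, "intro", 10),
        Chunk(1, 0.9, "definition", 10),
        Chunk(2, 0.5, "aside", 10),
        Chunk(3, 0.8, "exception", 10),
    ]
    for c in select_within_budget(candidates, budget_tokens=20):
        print(c.position, c.text)
```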

Where Long Context Actually Wins

Despite the cost and latency, there are clear cases where long context is the right tool:

- Global document understanding — legal contract reviews, codebase audits, spec-vs-implementation validation

- Implicit queries — “what are the most concerning parts?” requires the model to read everything

- Multi-hop reasoning across a single corpus — chunking risks separating related facts that the model needs to connect

- One-off analytical tasks — due diligence, research synthesis, competitive analysis (low volume, high value)

- Output-heavy generation with DeepSeek V4 Pro — 384K output ceiling enables single-shot long-form drafting

The Verdict

Long context is a specialized tool, not a general-purpose replacement for RAG. It shines for tasks requiring global document understanding, implicit exploration, or one-shot analysis of small-to-medium corpora. It fails for high-volume, low-latency, or fact-specific queries — which is 80%+ of production workloads.

What to do today:

- Default to RAG for any knowledge retrieval pipeline. It’s 1,250x cheaper, 30x faster, and more reliable for specific fact queries.

- Add semantic caching — up to 73% cost reduction on repeated queries.

- Reserve long context for global understanding tasks and low-volume analytical work.

- Build a query router — a simple rule-based classifier handles 90% of routing decisions correctly.

- Benchmark on YOUR data — NIAH scores don’t predict production performance. Run a 40-line eval script (see below) on your actual query distribution.
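For the semantic caching item, the core idea fits in a short class: embed each incoming query, and reuse a stored answer when a previous query is similar enough. This is a toy sketch. The `embed` callable is supplied by you (any function that maps text to a vector), the 0.9 cosine threshold is an arbitrary starting point, and the linear scan would be replaced by a vector index at any real volume.

```python
import math
from typing import Callable, Optional

Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Return a stored answer when a new query is close to a cached one."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.9):
        self.embed = embed            # caller-supplied embedding function
        self.threshold = threshold    # minimum similarity to count as a hit
        self.entries: list[tuple[Vector, str]] = []

    def get(self, query: str) -> Optional[str]:
        q = self.embed(query)
        best_score, best_answer = 0.0, None
        for vec, answer in self.entries:
            score = cosine(q, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, query: str, answer: str) -> None:
        self.entries.append((self.embed(query), answer))
```

Check the cache before calling the router, and store the final answer afterwards. Tune the threshold on real traffic: too low and users get answers to a different question, too high and the hit rate collapses.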

The Bottom Line

If you’re dumping everything into one prompt because it’s easier than building RAG, you’re paying 1,250x more to get worse answers. Long context is a strategic tool for specific use cases — not a replacement for retrieval architecture.

Quick Eval Script

Copy this to test lost-in-the-middle on your own model and data:

```python
# run_lost_in_middle.py: run this on YOUR data
# Usage: python3 run_lost_in_middle.py --model claude-sonnet-4-5 --num_chunks 200
import argparse

from anthropic import Anthropic

parser = argparse.ArgumentParser()
parser.add_argument("--model", default="claude-sonnet-4-5")
parser.add_argument("--num_chunks", type=int, default=200)
args = parser.parse_args()

client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment

DISTRACTOR = "The harbor district was quiet... Fishermen left at dawn."
NEEDLE = "The archive code for the 1973 harbor renovation is K-7421-Q."
QUESTION = "What is the municipal archive code for the 1973 harbor renovation?"
GOLD = "K-7421-Q"

# Probe the start, the quartiles, and the end of the context.
# The set removes duplicate positions when num_chunks is very small.
last = args.num_chunks - 1
positions = sorted({0, args.num_chunks // 4, args.num_chunks // 2,
                    3 * args.num_chunks // 4, last})

for pos in positions:
    # Build a haystack of identical distractors with the needle at `pos`.
    chunks = [DISTRACTOR] * args.num_chunks
    chunks[pos] = NEEDLE
    context = "\n\n".join(chunks)
    msg = client.messages.create(
        model=args.model,
        max_tokens=128,
        messages=[{
            "role": "user",
            "content": f"Read this and answer.\n\n<passage>\n{context}\n</passage>\n\nQuestion: {QUESTION}",
        }],
    )
    hit = GOLD in msg.content[0].text
    region = "edge" if pos in (0, last) else "middle"
    print(f"Position {pos}/{args.num_chunks}: {'HIT' if hit else 'MISS'} (region: {region})")
```

Run this on 3 different model snapshots before making routing decisions. The results will surprise you.
